Data Management explained

Data is the information that drives business. It can be structured in rows and columns, such as a customer's name, address, and phone number, or it can be unstructured, such as an email or a social media post. Structured data typically lives in relational database management systems (RDBMS), such as those from Oracle, IBM, and Microsoft, as well as the open-source PostgreSQL and MySQL, among others. That data can be accessed using the standard Structured Query Language (SQL). Unstructured data often resides in what are called NoSQL databases, such as Cassandra, Couchbase, MongoDB, and many others. Many organizations today run both kinds of databases.
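To make the distinction concrete, here is a minimal sketch in Python. It uses the standard library's in-memory SQLite database as a stand-in for a relational system, and a plain dictionary serialized to JSON as a stand-in for a schemaless record of the kind a NoSQL store might hold. The table name, field names, and sample values are all hypothetical and chosen only for illustration.

```python
import json
import sqlite3

# Structured data: a fixed set of columns, queried with SQL.
# SQLite is used here only as a convenient stand-in for any relational database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (name TEXT, address TEXT, phone TEXT)")
conn.execute(
    "INSERT INTO customers VALUES (?, ?, ?)",
    ("Ada Example", "1 Sample Street", "555-0100"),  # hypothetical record
)
row = conn.execute(
    "SELECT name, phone FROM customers WHERE name = ?",
    ("Ada Example",),
).fetchone()
conn.close()

# Unstructured (schemaless) data: free text plus whatever fields happen to exist.
# A document like this has no predefined columns.
post = {
    "type": "social_post",
    "text": "Loving the new release!",
    "tags": ["release"],
}
serialized = json.dumps(post)

print(row)
print(serialized)
```

The relational half depends on a schema declared up front, which is what makes the SQL query possible. The document half can carry any shape of content, at the cost of leaving interpretation to whatever reads it.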

Once the data is stored, it must be easily retrievable, found amid the mountains of data organizations collect, and made available at scale. Numerous tools exist for those jobs, including Hadoop, Apache Spark and many more. It is through the collection and analysis of data that businesses can make decisions that affect their bottom line.

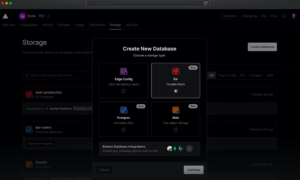

Vercel introduces a suite of serverless storage solutions

Vercel announced its suite of serverless storage solutions: Vercel KV, Postgres, and Blob to make it easier to server render just-in-time data as part of the company’s efforts to “make databases a first-class part of the frontend cloud.” Vercel KV is a serverless Redis solution that’s easy and durable, powered by Upstash. With Vercel KV, … continue reading

InfluxDB 3.0 released with rebuilt database and storage engine for time series analytics

InfluxDB announced expanded time series capabilities across its product portfolio with the release of InfluxDB 3.0, the company’s rebuilt database and storage engine for time series analytics. “InfluxDB 3.0 is a major milestone for InfluxData, developed with cutting-edge technologies focused on scale and performance to deliver the future of time series,” said Evan Kaplan, CEO … continue reading

Slack’s new platform makes it easier for developers to build and distribute apps

Slack has launched its next-generation platform with new features and capabilities to make it easier for developers to build and distribute apps on the Slack platform. The platform includes modular architecture grounded in building blocks like functions, triggers, and workflows. They’re remixable, reusable, and hook into everything flowing in and out of Slack. It also … continue reading

UserTesting announces friction testing capability

UserTesting announced machine learning innovations to the UserTesting Human Insight Platform to help businesses gain the context needed to understand and address user needs. One update is friction detection powered by machine learning to visually identify moments in both individual video sessions, and across multiple videos, where people experience friction behaviors like excessive clicking or … continue reading

Android updates data deletion policy to provide more transparency to users

Google announced a new data deletion policy to provide users with more transparency and authority when it comes to managing their in-app data. Developers will soon be required to include an option in their apps for users to initiate the process of deleting their account and associated data both within the app and online on … continue reading

Google now shows datasets in search results

The new ability to see datasets in search results is aimed at helping scientific research, business analysis, or public policy creators get access to data quickly, according to Natasha Noy, research scientist, and Omar Benjelloun, software engineer at Google Research in a blog post. Google search engine users can click on any of the top … continue reading

Talend Winter ’23 release introduces cloud migration capabilities

Data integration company Talend has announced updates to Talend Data Fabric, which is an end-to-end platform for data discovery, transformation, governance, and sharing. The Winter ’23 release adds capabilities for automating cloud migrations and data management, expanding data connectivity, and improving data visibility, quality, control, and access. To ease migrations, Talend has added the ability … continue reading

Don’t let data compliance block software innovation; automation is the key

The need for the digital transformation of business processes, operations, and products is nearly ubiquitous. This is putting development teams under immense pressure to accelerate software releases, despite time and budget constraints. At the same time, compliance with data privacy and protection mandates, as well as other risk mitigation efforts (e.g., zero trust), often choke … continue reading

Developers have to keep pace with the rise of data streaming

The rise of data streaming has forced developers to either adapt and learn new skills or be left behind. The data industry evolves at supersonic speed, and it can be challenging for developers to constantly keep up. SD Times recently had a chance to speak with Michael Drogalis, the principal technologist at Confluent, a company … continue reading

Zero-Copy Integration standard made available to public

The Digital Governance Council and Data Collaboration Alliance have announced the public release of Zero-Copy Integration, which is a Canadian standard that provides a framework for meeting strict data protection regulations and dealing with the risks related to data silos and copy-based data integration. The Zero-Copy Integration framework establishes the following principles: data management via … continue reading

Data quality can save money, improve customer satisfaction

The effects of rampant inflation are being felt by marketing professionals. But investments in data quality can deliver cost savings and more effectiveness to organizations. According to Greg Brown, VP of global marketing at Melissa, “Data quality touches every aspect of business, making it one of the most successful areas to shave dollars off the … continue reading

Google announces innovations in privacy-enhancing technologies

Google is continuing its work to keep personal data safe with the announcement of new privacy-enhancing technologies (PETs). Over the last decade the PETs it has invented include Federated Learning, Differential Privacy, and Fully Homomorphic Encryption (FHE). From the company’s standpoint, this allows them to protect users’ personal data while also continuing to provide the … continue reading